CVE-2023-3269 StackRot

接上文,Eurus复现了StackRot( •̀ ω •́ )✧

Background

vma & maple tree

一块虚拟内存区域用一个vm_area_struct表示:

1 | |

在最开始的设计,vm_area_struct是用rb tree(红黑树)存储的;这个patch series[10]引入了maple tree(一种B树)存储vm_area_struct

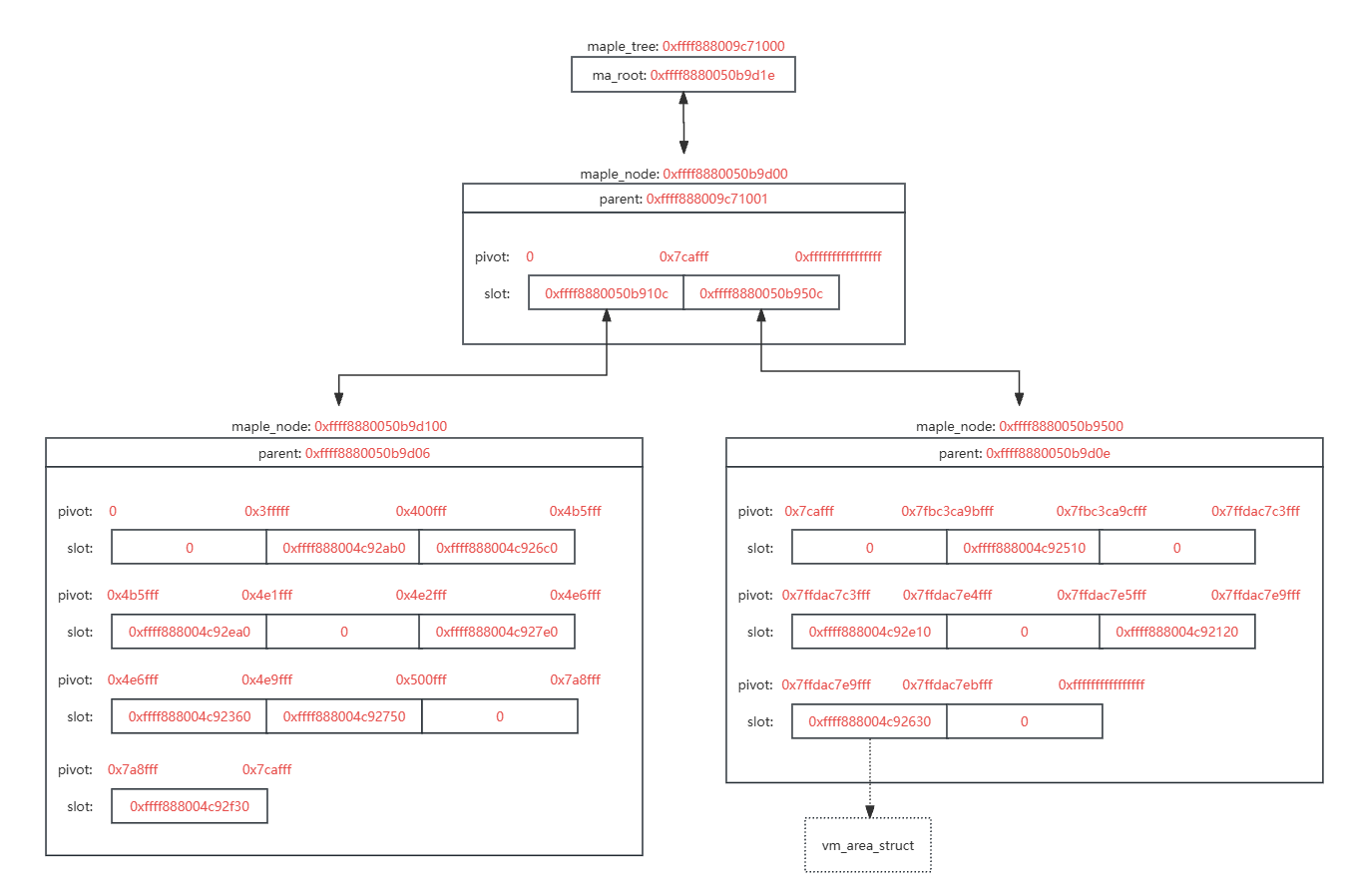

maple_node结点有不同的形式,以下以maple_range_64为例:

1 | |

主要注意两个成员:

- pivot数组:用于存储枢轴,即内存区域的端点

- slot数组:用于存储下一个maple node的指针或者指向vm_area_struct的指针

一棵存储vm_are_struct的maple tree如图所示,最下面一层的maple node slot中的指针都是指向vm_area_struct的:

每个进程的mm_struct存储了本进程的maple_tree

1 | |

注意一下这个mmap_lock,之后会讲到:)

栈扩展

在调用do_user_addr_fault处理页错误的时候存在一种情况用于栈扩展

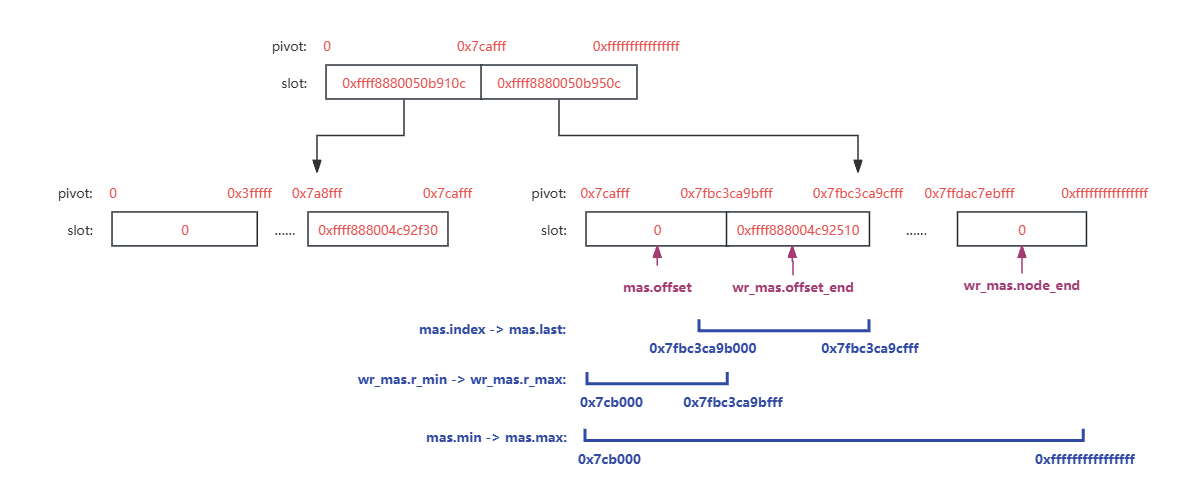

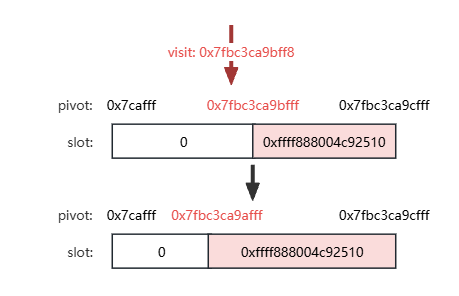

调用find_vma,查找包含给定address的vma或者下一个vma,比如0x7fbc3ca9bff8导致页错误会返回vma 0xffff888004c92510

如果取到的vma有VM_GROWSDOWN标志,那么会调用expand_stack进行栈拓展

1 | |

expand_stack会直接调到expand_downwards

expand_downards

接上文,如果是栈扩展导致的页错误,查找到的vma应该是下一个vma(即栈vma),expand_downwards会先调用mas_prev查找前一个vma,以下这段代码判定两个情况:

- 前一个vma没有VM_GROWSDOWN标志(即不是栈vma),那么栈和前一块内存区域需要存在一段stack_guard_gap

- 前一个vma有VM_GROWSDOWN标志,则不要求存在stack_guard_gap

这段代码暂时不太重要,但和漏洞关系很大,为了讲述顺序提一下

1 | |

之后expand_downwards以以下调用链更改vma maple tree进行栈拓展,其中从vma_mas_store到mas_wr_store_entry的调用(主要是mas_wr_store_entry)是在定位需要更改的maple node,在mas_wr_modify中进行更改

1 | |

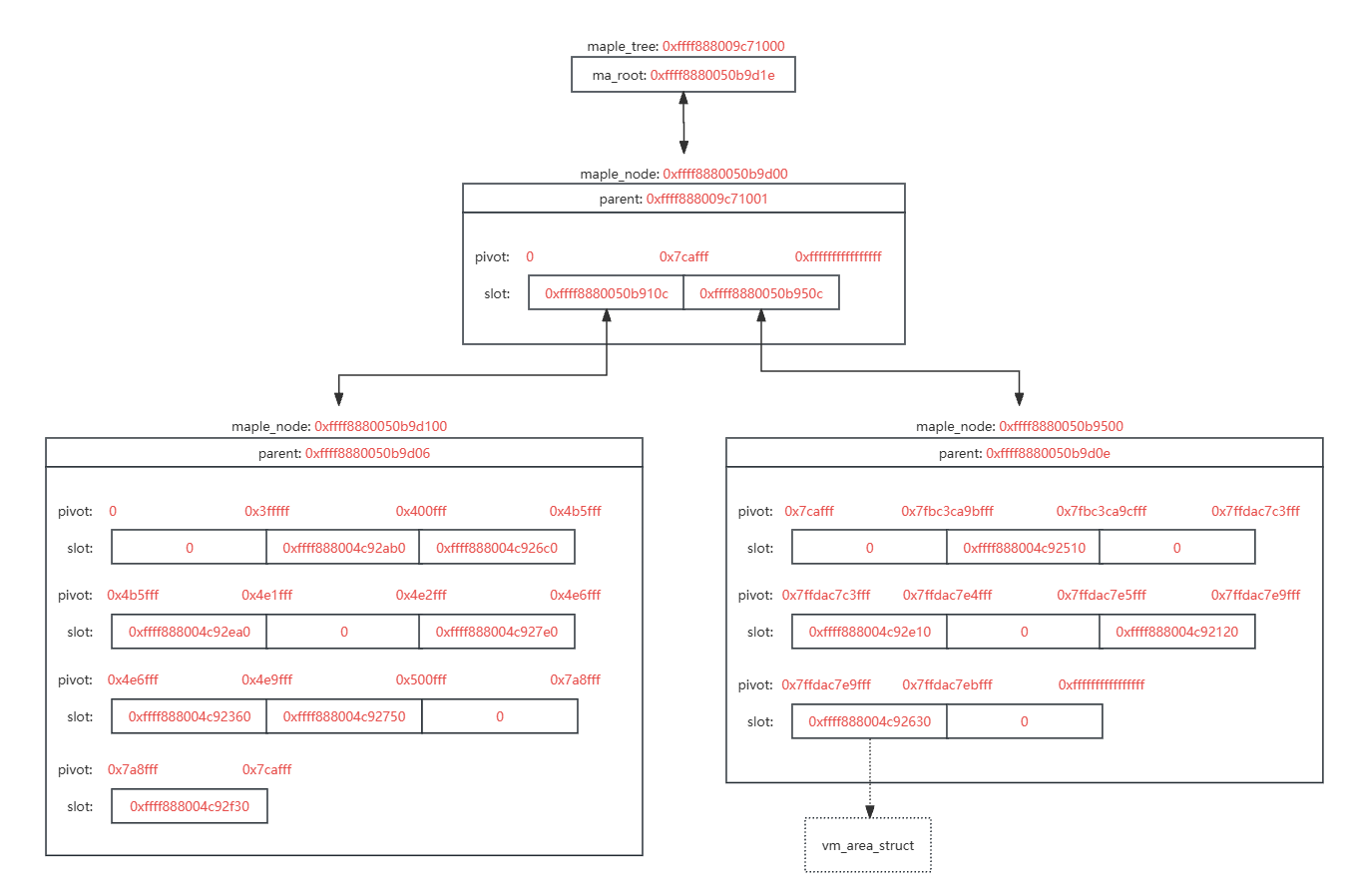

定位主要依靠两个数据结构,ma_state和ma_wr_state

- ma_state

- tree:正在更改的maple tree

- node:正在更改的maple node

- index & last:在当前语境下,表示需要插入的内存区间,以下图为例,访问0x7fbc3ca9bff8导致页错误,要将vma 0xffff888004c92510向下拓展一页

- min & max:当前正在访问的maple node表示的内存区间,图示maple node是[0x7cafff, 0xffffffffffffffff],就是堆以上的部分

- offset:相当于一个“光标”,表示当前结点正在更改的slot / pivot index

- ma_wr_state

- mas:协同工作的ma_state

- r_min & r_max:需要更改的结点的区间,以下图为例就是栈区间

- offset_end:需要更改的最后一个slot / pivot index

- node_end:本结点最后一个有效的slot / pivot index

- pivots:本结点的pivot数组

- slots:本结点的slot数组

- entry:需要写入maple tree的vm_area_struct

- content:要被覆盖的vm_area_struct指针

ma_state vs ma_wr_state:

- ma_state:状态跟踪器,记录了更改模板(index & last),当前结点状态(min & max,offset)

- ma_wr_state:写操作工作台,记录写操作影响的区间(min & max),写操作需要更改的最后一个pivot / slot(offset_end)

1 | |

设定ma_state和ma_wr_state查找定位需要更改的maple node流程如下:

从maple tree的根节点开始walk(mas_wr_walk),查找一个包含ma_state->index的区间,并设定ma_wr_state

在walk更改ma_wr_state的过程中,由于更新ma_state的mas_wr_walk_traverse函数永远少一步,所以最后一个更新的min & max是父结点区间,相当于当前整个结点的区间

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28static bool mas_wr_walk(struct ma_wr_state *wr_mas)

{

struct ma_state *mas = wr_mas->mas;

while (true) {

mas_wr_walk_descend(wr_mas); // go to next maple node

if (unlikely(mas_is_span_wr(wr_mas)))

return false;

wr_mas->content = mas_slot_locked(mas, wr_mas->slots,

mas->offset);

if (ma_is_leaf(wr_mas->type)) // HIT! RETURN

return true;

mas_wr_walk_traverse(wr_mas); // update ma_state

}

return true;

}

static inline void mas_wr_walk_traverse(struct ma_wr_state *wr_mas)

{

wr_mas->mas->max = wr_mas->r_max;

wr_mas->mas->min = wr_mas->r_min;

wr_mas->mas->node = wr_mas->content;

wr_mas->mas->offset = 0;

wr_mas->mas->depth++;

}

mas_wr_modify

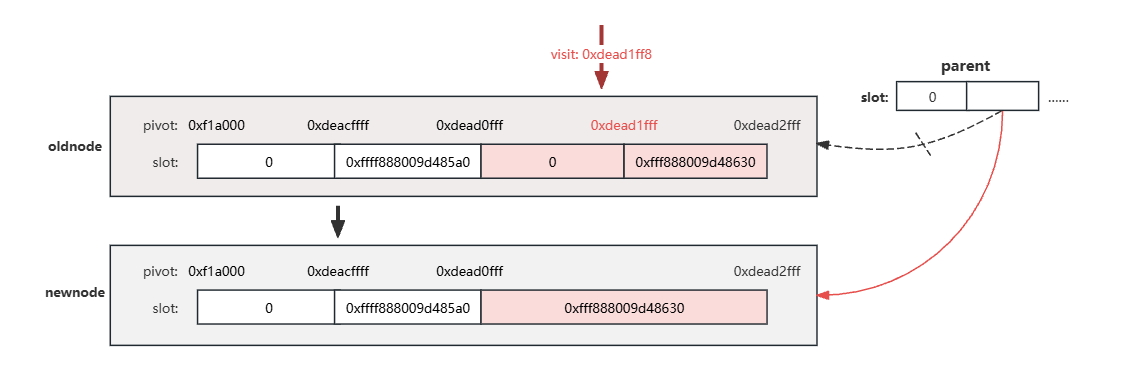

在拓展栈的情形下,根据之前讨论的前一个区间是否为栈区间分两种情况:

S1:前一个区间不是栈区间,那么只需要调整pivot就行,slot的数量没变

S2:前一个区间如果是栈区间,那么访问导致页错误的那个gap区间和后方的栈区间需要合并,slot少了一个

体现在代码上的话,就是mas_wr_modify中S1走上面的分支,S2走下面的分支

1 | |

mas_wr_slot_store只更改了pivot和slot数组的对应项

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22static inline bool mas_wr_slot_store(struct ma_wr_state *wr_mas)

{

struct ma_state *mas = wr_mas->mas;

unsigned long lmax; /* Logical max. */

unsigned char offset = mas->offset;

/* ... */

/* Overwriting a portion of offset and all of offset + 1 */

if ((offset + 1 < mt_pivots[wr_mas->type]) &&

(wr_mas->entry || wr_mas->pivots[offset + 1]))

wr_mas->pivots[offset + 1] = mas->last;

rcu_assign_pointer(wr_mas->slots[offset + 1], wr_mas->entry);

wr_mas->pivots[offset] = mas->index - 1;

mas->offset++; /* Keep mas accurate. */

done:

trace_ma_write(__func__, mas, 0, wr_mas->entry);

mas_update_gap(mas);

return true;

}而mas_wr_node_store由于slot的数量变了,后面的slot和pivot都需要往前移动,所以需要新申请一个maple node,把内容复制过去,并把父结点slot数组的对应指针换掉

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66static inline bool mas_wr_node_store(struct ma_wr_state *wr_mas)

{

/* ... */

newnode->parent = mas_mn(mas)->parent;

dst_pivots = ma_pivots(newnode, wr_mas->type);

dst_slots = ma_slots(newnode, wr_mas->type);

/* Copy from start to insert point */

memcpy(dst_pivots, wr_mas->pivots, sizeof(unsigned long) * (offset + 1));

memcpy(dst_slots, wr_mas->slots, sizeof(void *) * (offset + 1));

dst_offset = offset;

/* Handle insert of new range starting after old range */

if (wr_mas->r_min < mas->index) {

mas->offset++;

rcu_assign_pointer(dst_slots[dst_offset], wr_mas->content);

dst_pivots[dst_offset++] = mas->index - 1;

}

/* Store the new entry and range end. */

if (dst_offset < max_piv)

dst_pivots[dst_offset] = mas->last;

mas->offset = dst_offset;

rcu_assign_pointer(dst_slots[dst_offset], wr_mas->entry);

/*

* this range wrote to the end of the node or it overwrote the rest of

* the data

*/

if (wr_mas->offset_end > wr_mas->node_end || mas->last >= mas->max) {

new_end = dst_offset;

goto done;

}

dst_offset++;

/* Copy to the end of node if necessary. */

copy_size = wr_mas->node_end - wr_mas->offset_end + 1;

memcpy(dst_slots + dst_offset, wr_mas->slots + wr_mas->offset_end,

sizeof(void *) * copy_size);

if (dst_offset < max_piv) {

if (copy_size > max_piv - dst_offset)

copy_size = max_piv - dst_offset;

memcpy(dst_pivots + dst_offset,

wr_mas->pivots + wr_mas->offset_end,

sizeof(unsigned long) * copy_size);

}

if ((wr_mas->node_end == node_slots - 1) && (new_end < node_slots - 1))

dst_pivots[new_end] = mas->max;

done:

mas_leaf_set_meta(mas, newnode, dst_pivots, maple_leaf_64, new_end);

if (in_rcu) {

mte_set_node_dead(mas->node);

mas->node = mt_mk_node(newnode, wr_mas->type);

/* 在mas_replace里替换父节点slot数组的指针 */

mas_replace(mas, false);

} else {

memcpy(wr_mas->node, newnode, sizeof(struct maple_node));

}

trace_ma_write(__func__, mas, 0, wr_mas->entry);

mas_update_gap(mas);

return true;

}问题出现了:)什么问题暂且按下不表

maple tree锁机制

怎么开始写论文了…

在引入maple tree之前,使用rb tree组织vm_area_struct的时候,基本只使用mm_struct的mmap_lock保证并发访问的互斥

- 写操作需要持写锁,调mmap_write_lock

- 读操作需要持读锁,调mmap_read_lock

由于虚拟进程空间(mm_struct)是进程独有的,所以要访问vm_area_struct的rb tree只能通过mm_struct,持有mm_struct的mmap_lock就能保证互斥

1 | |

但由于这种粗粒度的管理,所有vma访问都要争用mmap_lock,导致这把锁的争用非常严重

所以引入了maple tree,减少乃至消除mmap_lock的争用

The first user that is covered in this patch set is the vm_area_struct,

where three data structures are replaced by the maple tree: the augmented

rbtree, the vma cache, and the linked list of VMAs in the mm_struct. The

long term goal is to reduce or remove the mmap_lock contention.[1]

maple tree是RCU安全的,具体来说是slot和pad数组的指针使用RCU保护

1 | |

RCU保证读写的并发,写者需要持有maple tree的ma_lock保证写者之间的并发

The tree can also be put into an RCU-safe mode of operation which allows reading and writing concurrently. Writers must synchronize on a lock, which can be the default spinlock, or the user can set the lock to an external lock of a different type.[2]

1 | |

maple tree提供Normal API和Advanced API,在使用Normal API时不用担心锁的问题,因为API会自己处理持锁问题

You do not have to worry about locking. See Advanced Locking for other options.[3]

The Maple Tree uses RCU and an internal spinlock to synchronise access:

Takes RCU read lock:

- mtree_load()

- mt_find()

- mt_for_each()

- mt_next()

- mt_prev()

Takes ma_lock internally:

- mtree_store()

- mtree_store_range()

- mtree_insert()

- mtree_insert_range()

- mtree_erase()

- mtree_dup()

- mtree_destroy()

- mt_set_in_rcu()

- mt_clear_in_rcu()

举例mt_find:

1 | |

Advanced API提供更好的性能,但与之相同的,用户需要自行处理持锁的问题。Advanced API使用之前提到的ma_state进行状态跟踪,这也是mas前缀的来源

The advanced API offers more flexibility and better performance at the cost of an interface which can be harder to use and has fewer safeguards. You must take care of your own locking while using the advanced API. You can use the ma_lock, RCU or an external lock for protection. You can mix advanced and normal operations on the same array, as long as the locking is compatible.[4]

引入maple tree需要将很多原本使用rb tree的vma查找的API中的rb tree操作替换成maple tree操作

比如find_vma,这里的替换使用的是Normal API mt_find,会自行处理RCU锁[5]

比如find_vma_prev,替换使用的是Advanced API,需要用户自行加锁,所以patch v4开发者评论建议在documentation comments中提出这一点[6]

Additional note: the previous patch for find_vma() mentioned “Using the

maple tree interface mt_find() will handle the RCU locking” while

find_vma_prev() uses the advanced maple tree API, thus IIUC without RCU

locking, and doesn’t add its own. This can easily result in a bug,

especially if the documentation comments don’t mention it at all?v5补充了comments,在使用find_vma_prev之前已经持有了mmap_lock所以不需要RCU lock了

1

2

3

4

5

6

7

8

9

10

11

12

13/**

* find_vma_prev() - Find the VMA for a given address, or the next vma and

* set %pprev to the previous VMA, if any.

* @mm: The mm_struct to check

* @addr: The address

* @pprev: The pointer to set to the previous VMA

*

* Note that RCU lock is missing here since the external mmap_lock() is used

* instead.

*

* Returns: The VMA associated with @addr, or the next vma.

* May return %NULL in the case of no vma at addr or above.

*/

至于为什么明明希望减少mmap_lock的争用,依然不持RCU使用Advanced API,我没查出来 ̄へ ̄

为了减少mmap_lock的争用,后续的Per-VMA locks系列patch[7]提供了更加细粒度的vma锁保护

综上:

- maple tree RCU+ma_lock保证vma的查找并发

- Per-VMA locks保证单个vma的读写并发

两者提供了原本由mmap_lock提供的vma数据结构并发安全,但更细粒度。Per-VMA locks系列patch在page fault处理中通过这种方法绕过了mmap_lock,后续patch在/proc/pid/maps绕过了mmap_lock

漏洞分析

根据maple tree锁机制所述,Per-VMA locks+maple tree RCU & ma_lock能提供mmap_lock提供的vm_area_struct并发安全,但在引入Per-VMA locks之前还需要mmap_lock,因为maple tree只提供vm_area_struct遍历过程的锁保护,不提供vm_are_struct数据的锁保护

所以及时已经引入了maple tree,依然需要使用mmap_lock

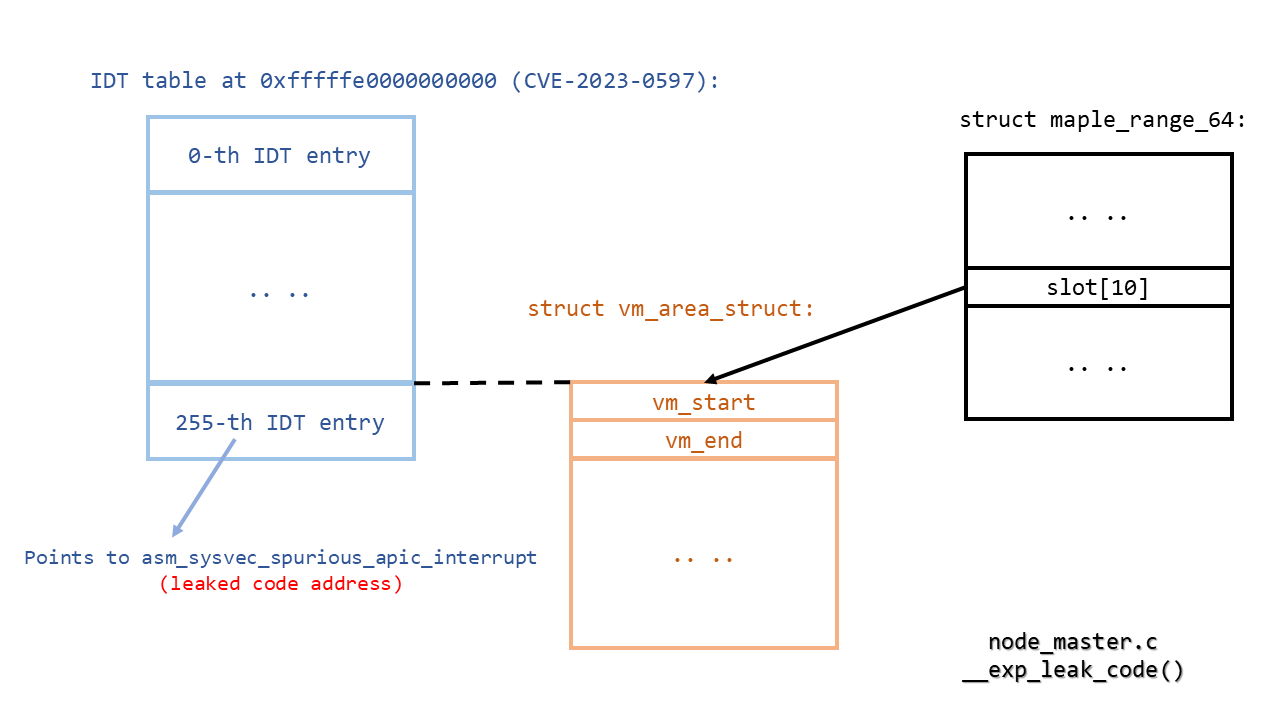

在栈扩展中提到某种情形下需要进行slot合并:释放旧结点、申请新结点、改父节点slot指针,也就是对maple tree进行写操作,但expand_stack没有持mmap_write_lock,而是持mmap_read_lock

虽然理论上来说maple tree RCU能提供遍历过程读写的锁保护,但由find_vma_prev的comments可知,现在并没有完全依赖RCU,部分路径依然依赖mmap_lock,没有做到RCU安全

1 | |

综上对于某些maple tree读路径:

- RCU层面没持rcu_read_lock

- 在expand_stack合并slot情形下写侧没有mmap_write_lock保护

出问题了呢~

这个漏洞可以认为是读侧缺少rcu_read_lock导致的race,也可以是认为是写侧没有持mmap_write_lock导致的race,在修复的时候选择的修复策略是expand_stack加mmap_write_lock[8],因为上面也说过,理论上现在无法提供vm_area_struct数据的锁保护,并且现行的代码策略不倾向于全部路径提供RCU锁保护,所以修复选择加mmap_write_lock

在引入maple tree之前vm_are_struct的遍历完全依赖mmap_lock,但expand_stack这种明显的写路径没加mmap_write_lock其实明显是一个bug,但一直没有出现问题,因为在这种情形下rb tree和maple tree不一样,不需要进行slot的合并,只需要更改vm_start[8]

That is, it was all fine until Ruihan Li pointed out that now that the vma layout uses the maple tree code, we really don’t just change vm_start and vm_end any more, and the locking really is broken. Oops.

漏洞利用

StackRot原文[9]写的很好了,这里就进行一个翻译,加上实操的一些注意事项

写侧就是expand_stack,读侧我们需要找一条遍历路径能够:

- 遍历时间足够长,能够拖到RCU宽限期结束

- 遍历会从vm_area_struct取某些信息返回用户态,用于leak kernel address

- 遍历使用vm_are_struct的一些函数指针,用于劫持PC

选择/proc/pid/maps读取路径

From UAFBR to UAF

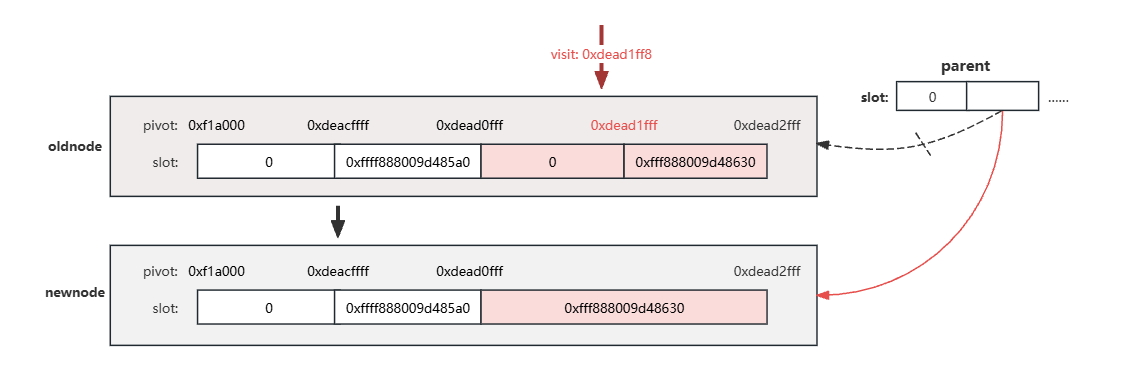

以下是写侧的调用栈,在mas_wr_node_store替换slot指针之前获取到old node指针,在call_rcu free old node之后访问old node就有UAF了,这样就需要解决两个问题:

- 怎么知道old node什么时候free

- 怎么确保读侧在RCU宽限期结束,old node被free之后访问old node

1 | |

synchronize_rcu函数会等到RCU宽限期结束,确保先前的RCU callbacks被唤醒,linux提供系统调用

membarrier(MEMBARRIER_CMD_GLOBAL, 0, -1)最后call synchronize_rcu,这样这个系统调用返回的时候,可以确保old node被free1

2

3

4

5

6

7

8

9

10

11

12

13

14

15SYSCALL_DEFINE3(membarrier, int, cmd, unsigned int, flags, int, cpu_id)

{

/* ... */

switch (cmd) {

/* ... */

case MEMBARRIER_CMD_GLOBAL:

/* MEMBARRIER_CMD_GLOBAL is not compatible with nohz_full. */

if (tick_nohz_full_enabled())

return -EINVAL;

if (num_online_cpus() > 1)

synchronize_rcu();

return 0;

/* ... */

}

}要解决问题2可以有以下几种方式:

遍历任务被抢占,RCU宽限期结束,然后遍历任务再恢复执行,但这需要开启CONFIG_PREEMPT

遍历任务睡眠(比如等待IO),RCU宽限期结束,遍历继续,目前没发现这样的可用路径

遍历任务被中断(比如时钟中断),RCU宽限期结束,但结束RCU宽限期需要进程能处理IPI中断,如果遍历任务已经在中断处理中会处在关中断状态

拖长遍历任务,让RCU宽限期到期,这是最后选择的方案

如果RCU宽限期超过jiffies_till_first_fqs,IPI中断会被发往所有CPU验证是否在RCU临界区,由于遍历没有持rcu_read_lock,被判定不在RCU临界区,触发voluntary preemption,RCU宽限期结束,UAF有了

至于怎么拖长遍历任务,可以mmap一个文件为一个vm_are_struct,在读取/proc/pid/maps时会调用seq_file_path获取文件路径

1 | |

然后以以下调用栈调用到__prepend_path

1 | |

在这个while循环里,只要路径足够深,就能拖很长的时间:)

1 | |

关于UAF窗口

/proc/self/maps的seq_operations如下:

1 | |

其中m_start和m_next都会调用proc_get_vma迭代取下一个vma,其中priv->iter就是一个上文提过的ma_state

1 | |

m_next和m_start调用proc_get_vma的不同在于m_start会将priv->iter的node重置为MAS_START,之后在查找vma时会重新遍历maple tree取第一个node,而m_next不会重置node而是接着之前遍历的结果取下一个node/vma

触发UAF需要连续调用m_next,中途不能有m_start,因为如果重新遍历maple tree那么会取到新的node,ma_state里的UAF指针丢失

所以UAF需要的执行路径是:

m_next记录target node到ma_state

expand_stack调用到mas_replace,替换slot指针为new node,准备rcu释放target node

1

2

3

4

5

6

7

8

9

10

11

12

13static inline void mas_replace(struct ma_state *mas, bool advanced)

__must_hold(mas->tree->lock)

{

// ...

} else {

rcu_assign_pointer(slots[offset], mas->node);

}

if (!advanced) {

mte_set_node_dead(old_enode);

mas_free(mas, old_enode);

}

}show_map打印内存段信息,通过超长目录拖时间达到rcu宽限期,target node被free,然后被用户取到,伪造vma

m_next的node依然是已经free的target node,从中取下一个vma,取到了用户伪造的指针

想要达成这个执行顺序最重要的就是避免调用m_start,需要避免两个问题:

seq_file有一个buffer存储输出内容,如果buffer overflow会中止这轮读取,下轮读取重新申请buffer,再把数据复制过去,这样会导致调用m_start

由于需要拖时间我们需要超长的目录一定会导致overflow,可以在低地址mmap一个更长的目录先扩大buffer,这样之后打UAF的时候就不会触发overflow

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34ssize_t seq_read_iter(struct kiocb *iocb, struct iov_iter *iter)

{

// ...

m->from = 0;

p = m->op->start(m, &m->index);

// ...

while (1) {

size_t offs = m->count;

loff_t pos = m->index;

p = m->op->next(m, p, &m->index);

if (pos == m->index) {

pr_info_ratelimited("buggy .next function %ps did not update position index\n",

m->op->next);

m->index++;

}

if (!p || IS_ERR(p)) // no next record for us

break;

if (m->count >= iov_iter_count(iter))

break;

err = m->op->show(m, p);

if (err > 0) { // ->show() says "skip it"

m->count = offs;

} else if (err || seq_has_overflowed(m)) { // overflow!!!

m->count = offs;

break;

}

}

m->op->stop(m, p);

n = copy_to_iter(m->buf, m->count, iter);

// ...

}每次read系统调用都会调用m_start,所以UAF的过程需要在一次read里完成

关于长目录拖时间

实际测试的时候发现一个现象,似乎真正能拖长时间的实际上是内存写,在这个利用场景下是往seq buffer里写目录,所以增加目录深度实际上是变相增长目录长度,由于过深的目录会严重消耗系统资源,所以可以增加单个文件的长度,比如/a/a/a->/aaa/aaa/aaa来增加目录长度

这个现象在seq buffer overflow的时候尤其明显,因为overflow需要把旧buffer的数据移到新buffer,进行大量的内存写

From slab UAF to page UAF

maple node使用专用slab,需要cross cache,可以fork大量子进程喷maple node,没什么好说的

From UAF to address leaking

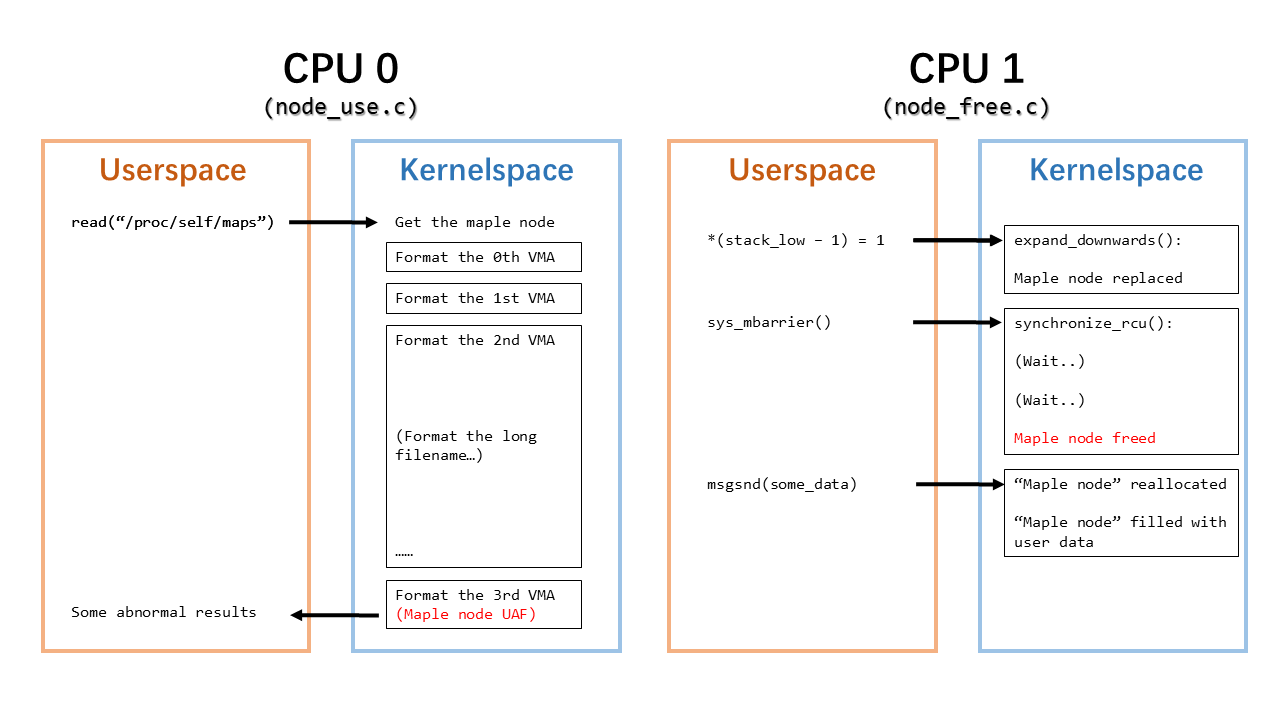

通过伪造maple node的slot指针指向已知地址可以读取地址内容,此时该地址会被视为一个vm_area_struct结构体,show_map_vma会读取vm_area_struct->vm_start和vm_area_struct->vm_end返回用户态

地址内容需要满足某些要求才能被视为一个合法的vm_area_struct,不会再show_map_vma的时候panic:

- vma->vm_mm为空或合法地址

- vma->vm_file为空

- 在泄漏阶段,vma->vm_ops要为空,或者vma->vm_ops->name为空

1 | |

伪造slot需要已知地址,原作者用CVE-2023-0597泄漏内核基址,在泄漏堆地址的时候则使用init_task的tasks双链表泄漏堆地址,这样可以通过喷task_struct再free来保证获得一个地址已知的页来伪造vm_area_struct以及写rop链

但可能是因为我用的自己编的内核,环境可能不太一样,实测的时候如果使用tasks链表泄漏地址,vm_ops和active_mm重合且不为空,active_mm的name的偏移处也不为空,无法满足泄漏要求,所以我用了一种非常不优雅的方式来泄漏堆地址~~~///(^v^)\~~~

ret2dir,mmap大量页喷内存,喷的足够多就能一定程度上保证目标地址可控,且这种leak方法有一定程度上的内存搜索能力,运气好成功率还是可以保证的(但换个环境就不一定了😓)

有空可以研究一下为什么active_mm不空了,画一个饼(

想要更优雅地泄漏需要找到满足以下要求的资源:

- 可喷

- 内核数据段存有指针,或者通过递归读取能获得指针

- 每个读取过程都满足leak条件

我没找到有什么资源满足以上要求,放弃(lll¬ω¬)

From UAF to root privileges

伪造跳转表来ROP,也没什么好说的

Exp

一个写的很丑的exp:(

by一个懒得研究原作者exp选择自己手搓的屑

1 | |

碎碎念

这一个洞从研究到手搓exp断断续续搞了两个星期,折磨但还挺有趣的,以及留下了很多待研究的问题

引用

- https://lore.kernel.org/lkml/20220906194824.2110408-1-Liam.Howlett@oracle.com/

- https://docs.kernel.org/core-api/maple_tree.html#overview

- https://docs.kernel.org/core-api/maple_tree.html#locking

- https://docs.kernel.org/core-api/maple_tree.html#advanced-api

- https://lore.kernel.org/linux-mm/20211201142918.921493-10-Liam.Howlett@oracle.com/

- https://lore.kernel.org/linux-mm/92ef4cae-ccfb-d952-6b36-71036329e8da@suse.cz/

- https://lore.kernel.org/lkml/20230227173632.3292573-1-surenb@google.com/

- https://git.kernel.org/pub/scm/linux/kernel/git/torvalds/linux.git/commit/?id=9471f1f2f50282b9e8f59198ec6bb738b4ccc009

- https://github.com/lrh2000/StackRot

- https://lore.kernel.org/lkml/20220906194824.2110408-1-Liam.Howlett@oracle.com/